This project is being conducted jointly between researchers at Gallaudet University (Deborah Chen Pichler, Julie Hochgesang, and others) and the University of Connecticut. SLAAASh is short for “Sign Language Acquisition, Annotation, Archiving and Sharing” though sometimes we instead use “SLAASh” to refer to our overall goal to “annotate, archive, and share” sign language video data from all ages. The ASL Signbank project is part of SLAASh, so check out the ASL SignBank page as well!

Language data usually needs to be transcribed in order to be machine-readable and usable for research. This is especially true for sign language data collected on videotape. Large corpora are available for many other languages, shared between different labs and projects for research use. Yet, no large and shared corpus exists for ASL. Our goal is to prepare a corpus of previously collected data on ASL acquisition to share with other researchers, in order to permit more researchers to conduct studies relating to ASL acquisition and use.

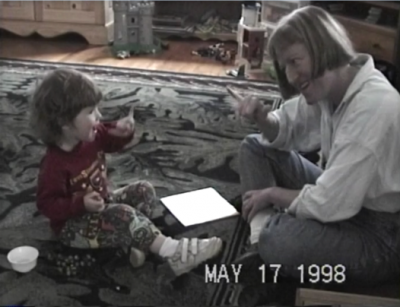

The video data to be used consists of Child ASL data from the CLESS project previously conducted by our lab. Because the data was originally collected for another purpose, one of our initial goals is to request consent for further data sharing from all past participants and seek ethically-sound, community-supported practices for decision making. Our lab has held focus groups for input from various types of stakeholders: Potential participants, family members, and other Deaf community members. Our primary concern is protection of individual rights, with research as an important but secondary concern.

The video data to be used consists of Child ASL data from the CLESS project previously conducted by our lab. Because the data was originally collected for another purpose, one of our initial goals is to request consent for further data sharing from all past participants and seek ethically-sound, community-supported practices for decision making. Our lab has held focus groups for input from various types of stakeholders: Potential participants, family members, and other Deaf community members. Our primary concern is protection of individual rights, with research as an important but secondary concern.

SLAAASh Data:

| Child | # Sessions | Age begin | Age end | Time observed (hrs:mins) | Est. # gloss tokens | Est. # child utterances |

| ABY | 79 | 1;04.22 | 3;04.07 | 73:43 | 130,000 | 16,600 |

| JIL | 83 | 1;07.03 | 3;07.09 | 79:16 | 119,000 | 17,800 |

| NED | 44 | 1;05.28 | 4;01.28 | 40:00 | 60,000 | 9,000 |

| SAL | 18 | 1;07.18 | 2;10.01 | 17:11 | 23,000 | 3,900 |

| Total | 224 | 210:10 | 332,000 | 47,300 |

Previous annotation of the ASL video data needs standardization in order to be usable by a wider shared audience. The ASL SignBank’s lexicon of ID glosses greatly facilitates this. (See project page for more information.)

Previous annotation of the ASL video data needs standardization in order to be usable by a wider shared audience. The ASL SignBank’s lexicon of ID glosses greatly facilitates this. (See project page for more information.)

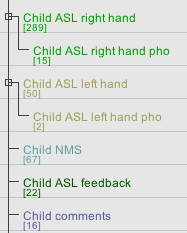

Our current day-to-day focus is on converting old annotation files to the new system, completing annotation of previously unfinished files, and conducting basic analyses of the data, including vocabulary counts, MLU, and IPSyn analyses. We plan to release each data set as it is prepared. We are already sharing our tools such as the ASL SignBank open source. Please see this website’s Instruments page (link) for our available scoresheets and scoring guides for these and other ASL measures.

For more information about SLAASh annotation

See this Figshare page posted by Julie Hochgesang.

Publications

Goodwin, Corina, Prunier, Lee, and Lillo-Martin, Diane. (2019). Parental Sign Input to Deaf Children of Deaf Parents: Vocabulary and Syntax. Proceedings of the 43rd annual Boston University Conference on Language Development, volume 1, 286-297. pdf

Chen Pichler, Deborah, Hochgesang, Julie, Simons, Doreen, and Lillo-Martin, Diane. (2016). Community Input on Re-consenting for Data Sharing. In Eleni Efthimiou, Stavroula-Evita Fotinea, Thomas Hanke, Julie Hochgesant, Jette Kristoffersen & Johanna Mesch (Eds.), Workshop Proceedings: 7th workshop on the Representation and Processing of Sign Languages: Corpus Mining, 29-34. pdf

Hochgesang, Julie, Pascual Villanueva, Pedro, Mathur, Gaurav, and Lillo-Martin, Diane. (2010). Building a Database while Considering Research Ethics in Sign Language Communities. Proceedings of the 4th Workshop on the Representation and Processing of Sign Languages: Corpora and Sign Language Technologies; 7th Language Resources and Evaluation Conference. pdf

Presentations

Lillo-Martin, Diane, Goodwin, Corina & Prunier, Lee. Measuring grammatical development in American Sign Language: ASL-IPSyn vs. MLU. Presented at the 15th Congress of the International Association for the Study of Child Language (IASCL); Philadelphia, PA; July 2020 postponed to July 2021.

Lillo-Martin, Diane & Chen Pichler, Deborah. ASL Pronoun Acquisition: Implications for Pronominal Theory. Generative Approaches to Language Acquisition – North America (GALANA); Reykjavik, Iceland; originally scheduled August 2020, postponed to May 2021.

Lillo-Martin, Diane (2020). Sign language acquisition and linguistic theory. Invited presentation; ABRALIN ao Vivo; online; May 2020.

Lillo-Martin, Diane, Hochgesang, Julie & Chen Picher, Deborah (2019). SOS: Doing Sensitive Open Science. Invited presentation, Workshop on Doing Reproducible and Rigorous Science with Deaf Children, Deaf Communities, and Sign Languages: Challenges and Opportunities; Berlin, Germany; September 2019.

Hochgesang, Julie, Catt, Donovan, Chen Pichler, Deborah, Goodwin, Corina, Kennedy, Carmelina, Prunier, Lee, Simons, Doreen & Lillo-Martin, Diane (2019). Sign Language Acquisition, Annotation, Archiving and Sharing: The SLAAASh Project Status Report. Poster presentation, Theoretical Issues in Sign Language Research (TISLR) 13; Hamburg, Germany; September 2019. pdf

Lillo-Martin, Diane & Chen Pichler, Deborah (2019). ASL Pronoun Acquisition: Implications for Pronominal Theory. Poster presentation, Theoretical Issues in Sign Language Research (TISLR) 13; Hamburg, Germany; September 2019.

Runesha, Birali, Tharsen, Jeffrey, Brentari, Diane, Goldin-Meadow, Susan & Lillo-Martin, Diane (2019). SAGA: Sign and Gesture Archive. Technology Showcase, 6th International Conference on Language Documentation and Conservation (ICLDC). Honolulu, HI; March 2019.

Lillo-Martin, Diane & Chen Pichler, Deborah (2018). Development of pointing signs in ASL and implications for their analysis. Poster presentation, Boston University Conference on Language Development (BUCLD). Boston, MA; November 2018.

Chen Pichler, Deborah, Gökgöz, Kadir & Lillo-Martin, Diane (2018). Points to self by Deaf, hearing and Coda children. International Conference on Sign Language Acquisition; Istanbul, Turkey; June 28, 2018. pdf

Goodwin, Corina & Lillo-Martin, Diane (2018). Aspects of Sign Input to Deaf children of Deaf parents. International Conference on Sign Language Acquisition; Istanbul, Turkey; June 27, 2018. pdf

Lillo-Martin, Diane & Chen Pichler, Deborah (2018). It’s not all ME, ME, ME: Revisiting the Acquisition of ASL Pronouns. Formal and Experimental Advances in Sign Language Theory (FEAST); Ca ‘Foscari University, Venice; June 18, 2018. pdf

Hochgesang, Julie, Crasborn, Onno & Lillo-Martin, Diane (2018). Building the ASL Signbank: Lemmatization Principles for ASL. Poster presentation, 11th edition of the Language Resources and Evaluation Conference; 8th Workshop on the Representation and Processing of Sign Languages: Involving the Language Community; Miyazaki, Japan; May 12, 2018.

Hochgesang, J.A. (2017). Ethics of working with signed language communities. Invited workshop lecture for “SIGN8 International Conference for Sign Language Users”. Florianópolis, SC, Brazil, Universidade Federal de Santa Catarina (UFSC), October 9-12.

Becker, Amelia. (2017.) “Selected finger combinations in American Sign Language: frequency, acquisition, and markedness.” CL2017 Pre-Conference Workshop 3: Corpus-based approaches to sign language linguistics: Into the second decade. University of Birmingham, July 24. pdf

Lillo-Martin, Diane, Goodwin, Corina & Prunier, Lee (2017). ASL-IPSyn: A new measure of grammatical development. Poster presentation, Boston University Conference on Language Development (BUCLD). Boston, MA; November 2017. pdf

Lillo-Martin, Diane, Prunier, Lee, Hochgesang, Julie, and Chen Pichler, Deborah. (2017.) Sign Language Acquisition: Annotation, Archiving and Sharing – Status Report. Poster presented at the 8th UConn Language Fest. pdf

Chen Pichler, D., J. Hochgesang, D. Simons & D. Lillo-Martin. (2016). Community Input on Re-consenting for Data Sharing. Presented at the 7th Workshop on the Representation and Processing of Sign Languages: Corpus Mining. LREC, Portorož, Slovenia, May 28.

Chen Pichler, Deborah, Hochgesang, Julie, Simons, Doreen, and Lillo-Martin, Diane. (2016). Reconsenting for Data Sharing. Poster presented at the 12th International Conference of Theoretical Issues in Sign Language Research. LaTrobe University, Melbourne, Australia, January 4-7. pdf

Research reported here was supported in part by the National Institute on Deafness and other Communication Disorders of the National Institutes of Health under Award Number R01DC013578. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.